What Are Altmetrics and Why Do We Need Them?

Are tweets and blog posts the new citations? The advent of social networks has undoubtedly changed scholarly communication, and scientific impact can no longer be determined by traditional bibliometrics alone. If you haven’t already, it’s time to get familiar with alternative metrics!

This post is part of a series that provides practical information and resources for academic authors and editors.

Today’s scientific publication landscape seems more complex than ever, but its immense growth was already evident in the middle of the last century. In 1963, the British historian of science Derek de Solla Price pointed out in his book “Little Science, Big Science” that the number of scientific publications and journals had increased almost exponentially since their first appearance. In this context, his quantitative analyses were a new phenomenon.

Around the same time, the information scientist Eugene Garfield developed his model of a science index, which made it possible for the first time not only to search for literature bibliographically or thematically, but also to find relevant publications through citation analyses. This marked the birth of bibliometrics and the Science Citation Index, which is still used today to calculate the Journal Impact Factor.

Over the years, citations have become a currency in their own right in many areas of science, especially in the STEM subjects, for individual researchers and scientific journals alike.

Although the use of bibliometrics is widespread, it is not without controversy, and it raises the question “Can science be evaluated?” Even advocates of bibliometric analysis are critical of its use in some respects, as evidenced by the “Leiden Manifesto on Research Metrics,” which postulates “ten commandments” regarding adherence to basic principles in research evaluation and calls for the consideration of qualitative aspects as well.

At the same time, individual human resources departments are requiring applicants for professorial positions to provide an h-index, thus relying largely on a single bibliometric indicator. Despite its 15 years of existence and great success, the sole use of the h-index is viewed very critically. Nonetheless, quantitative methods can be a good starting point for strategic projects in innovation management.
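To make the indicator concrete: the h-index is the largest number h such that h of an author's papers have each been cited at least h times. The following minimal Python sketch illustrates why relying on it alone can mislead. The function name and the two citation lists are hypothetical and serve only as an illustration.

```python
def h_index(citations):
    """Return the largest h such that h papers have at least h citations each."""
    ranked = sorted(citations, reverse=True)  # most-cited paper first
    h = 0
    for rank, count in enumerate(ranked, start=1):
        if count >= rank:
            h = rank   # the top `rank` papers all have at least `rank` citations
        else:
            break      # counts only decrease from here, so h cannot grow further
    return h


# Hypothetical citation counts for two invented authors
steady = [10, 8, 5, 4, 3]    # 30 citations in total
one_hit = [500, 4, 3, 1, 0]  # 508 citations in total

print(h_index(steady))   # 4
print(h_index(one_hit))  # 3
```

In this made-up example, the author with one exceptionally influential paper ends up with the lower h-index, which is one reason a single number is a thin basis for a hiring decision.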

A Fresh Breeze in Bibliometrics

With the advent of social networks, scientific communication has changed and expanded. Scientific impact is no longer determined solely in terms of traditional scientific publications, but also by how the publications are perceived in online media. Consequently, there is a need for supplementary bibliometric methods to answer the following questions:

How do tweets, Facebook posts, blog posts and news stories about scientific publications affect their scientific impact? How do social media and other online media contribute to the formation of knowledge — or, viewed from the other direction, how do they contribute to the knowledge transfer that is demanded by politics, business, and society?

Answers could be provided by alternative metrics, so-called altmetrics. They complement traditional bibliometrics with mention statistics from social media platforms and news channels. In this respect, the arrival of altmetrics can be compared to the introduction of the Science Citation Index, which for the first time enabled scientists to track where they had been cited. The only difference is that now these citations are called tweets, posts, likes or mentions.

Altmetrics make scientific impact visible more quickly than traditional bibliometric indicators do because they evolve more quickly and dynamically. Unlike in the traditional system, the citing items (tweets, posts, news stories) do not undergo peer review, and with each new document type, such as preprints, new parameters come into play.

How Relevant Are Alternative Channels?

Currently, scientists are mainly rewarded (e.g. through grants or improved career opportunities) for publishing their work in peer-reviewed scientific journals. But science has changed: young scientists in particular are not only publishing their work in journals but also blogging, podcasting, tweeting, and using other social media channels to share their research with the world.

Bibliometrics and altmetrics are not separate systems; instead, they are interconnected. In a recent study, we found that if one were to take a stack of scholarly publications and cognitively assess their relevance, many of the publications deemed highly relevant would receive high citation counts as well as a high altmetric score.

This suggests that relevance comes in different forms. If a paper is relevant, it is relevant not only at one level but at all levels. Relevance is inherent in the work (because of the topic being studied, the results, the writing style, etc.). This is also reflected in the fact that the skewed distributions of citations, likes, tweets, mentions, etc. across publications appear in the same way in both systems.

Push vs. Pull

The power of social media and other platforms on the internet enables science to exert a direct influence on society, to receive references to relevant work sooner, and thus to enter into discourses earlier. It also enables scientists to enhance the reputation of their subject as well as themselves.

Consider the (German-language) podcast “Das Coronavirus-Update” by Dr. Christian Drosten, the head of virology at Berlin’s Charité hospital. The podcast was not relevant to his reputation as a scientist. However, because of its format it increased his social relevance regarding the topics of virology and epidemiology to such an extent that today he can no longer be left out of any panel of experts.

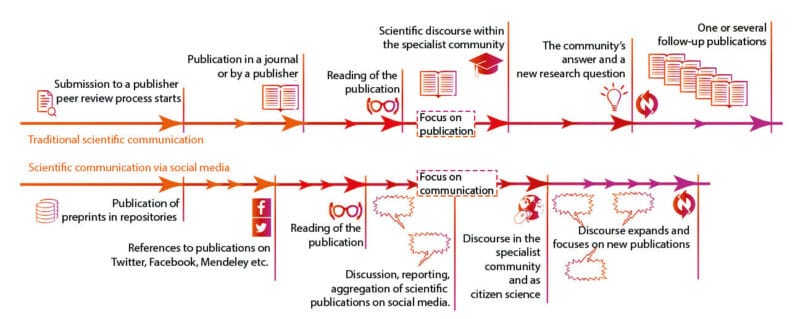

Whereas scientific communication traditionally centered on the act of publishing, social media is now shifting its focus toward the exchange itself. The increasing use of altmetrics is changing scientific communication and making the reception of research in social media a firmly established component of scientific impact.

This ultimately also changes people’s search behavior regarding scientific literature. Whereas in the past they actively searched for literature about a certain topic (“pull”), in the future they will also receive it when others recommend it, explain it, and make it accessible (“push”). Social media helps to reduce the effort required to access certain publications, and that increases their relevance for others. Social media is thus a new component of scientific communication — a contribution made by a generation of digital natives.

[Title image by Urupong/iStock/Getty Images Plus]